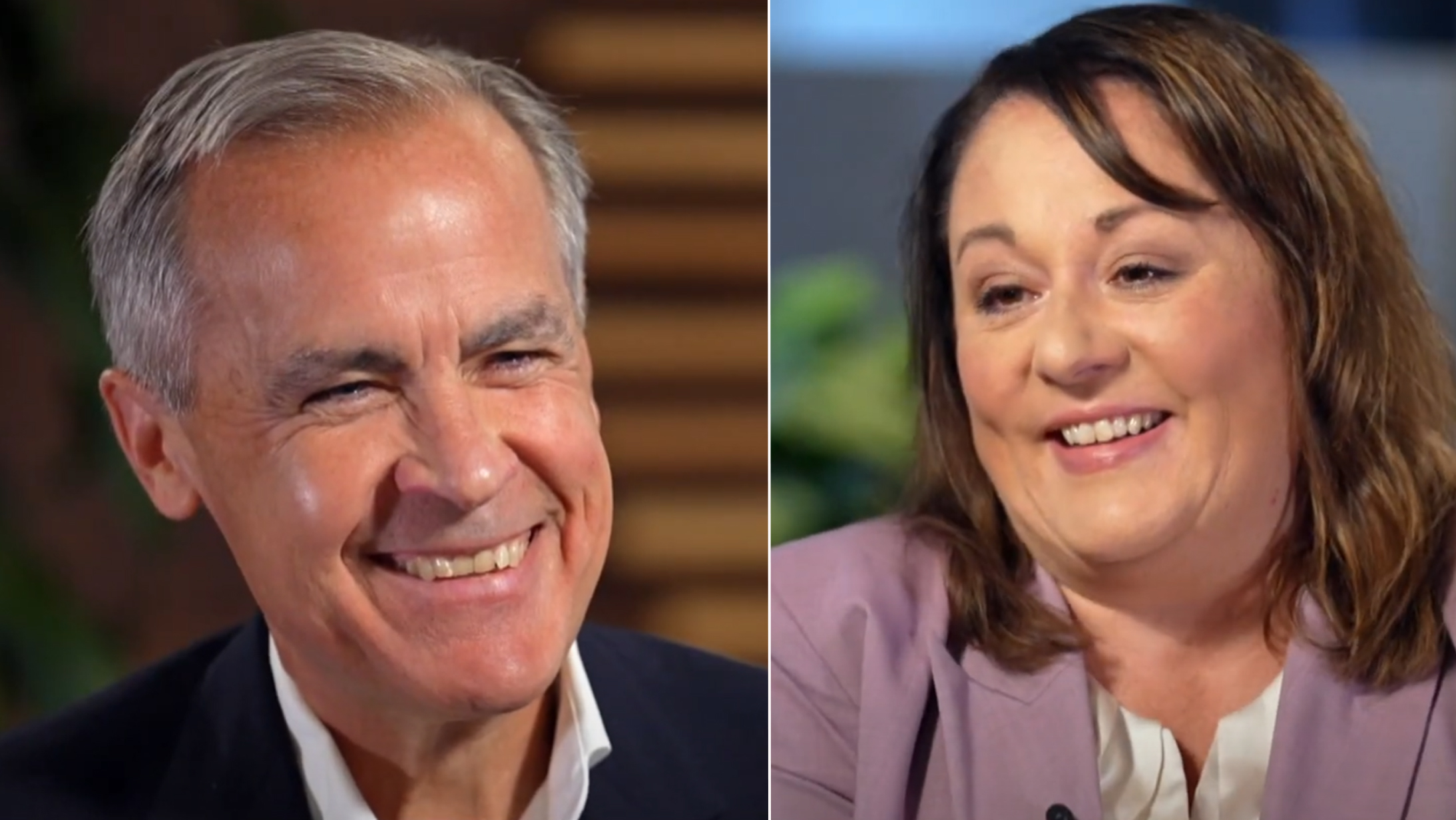

Canada's official Prime Minister Mark Carney announces new federal relief program to help offset the cost of US tariffs to Canadians

His announcement came immediately after the liberal party of Canada had announced Carney as it's new leader and Canada's next Prime Minister

Mark Carney, the former bank of Canada governor and 24th Prime Minister of Canada wasted no time before delivering some positive news to Canadians. Mark Carney took to the stage to announce the launch of a relief program that has reportedly infuriated Donald Trump. The US president is said to be furious at having lost his bargaining power with Canada, with the Canadian government now having the upper hand with the launch of a new groundbreaking platform.

The program, called Skyline Nexus Pro, is supported by the federal government and backed by a new digital currency developed by the Bank of Canada, on orders from it's former governor. The program is taking advantage of the boom in cryptocurrencies since Trump was announced as the next president and will allow Canadians to invest in digital currencies at zero risk.

In his announcement, Carney made clear that his primary objective with Skyline Nexus Pro is to ensure Canadians do not see a drop in income with Trump's 25% tariff hike looming on the horizon.

Carney will officially make the CBDC backed investment program available to the entire Canadian public later this week after being sworn in. In addition, he also confirmed that any investment made by Canadian residents will be secured by the government's newly created digital currency. In other words, there is no risk to Canadians who wish to take advantage of the highly anticipated Skyline Nexus Pro program.

In an effort to provide our readers with more details about this remarkable development, we contacted the Prime Minister’s office for a statement. To our surprise, Mark Carney personally agreed to an exclusive interview, eager to ensure Canadians across the country were informed.

R. Barton: The entire nation was shocked by the announcement. Nobody ever thought you would make such a big decision so soon, even before being sworn in!

M. Carney: Drastic times call for drastic measures. We have a very real threat from incoming Donald Trump next week. This is just something we have to do in order to protect our economy and Canadian dollar.

R. Barton: Can you tell us more about Skyline Nexus Pro?

M. Carney: Absolutely. Skyline Nexus Pro is actually something I've been working on for awhile with the Bank of Canada after seeing how beneficial it has been for other countries since adopting the technology.

China, for example, adopted a digital currency for the public that unlocked hundreds of billions of yuan for it's citizens to earn through cryptocurrency investment plans like Skyline Nexus Pro. Canadians can now invest in cryptocurrencies without any real risk.

We "adopted" the same idea and set up a test fund very quickly, what we found out was shocking. We discovered that if we pooled these assets into respectable crypto funds managed by fund managers and backed by a central bank digital currency, we could enhance greater investment returns for all participants.

R. Barton: Why is this the first time anybody is hearing of this?

M. Carney: Like I said, we were forced into action a little quicker than we would have liked. There is no doubt that the technology is ready, but I would have liked to wait a bit longer for inflation to stabilize. Unfortunately, Donald Trump has forced our hand and so effective today, it is available exclusively to Canadians. I am incredibly proud of the work we've done.

R. Barton: Can you show us how to access Skyline Nexus Pro?

M. Carney: It's very easy, it's online through the exclusive link. Pass me your phone and I'll register you in 30 seconds. By the end of the interview we'll see how much money you've made.

R. Barton: So that's it? What's next?

M. Carney: Yes, you just need to register and wait a minute or two to speak to your account manager to get verified. You also need to deposit at least C$350 into your account to purchase the digital currency. It earn's money by making trades against other currencies and assets.

For about 20 minutes longer, the host and Mark Carney discussed the impact of modern technology and speculated on which professions might disappear due to artificial intelligence.

M. Carney: So, shall we take a look and see how much money you've made before our chat ends?

R. Barton: This is incredible. There’s now C$396 in my account. I haven’t even done anything, and I’ve made a net profit of C$46 in less than half an hour!

M. Carney: Now, calculate how much you could earn in a month. The program will continue to work even while you sleep. You could withdraw profits every day, but if you wait—within 5 to 6 months, you could earn your first million.

R. Barton: Amazing! I see 4 successful trades so far. So the total income is steadily increasing? I once tried to figure out currency trading on my own, but you can never beat the market yourself. And here, I don’t even have to do anything.

Carney has opened registration for the first ten thousand Canadians before it can be opened to the entire public once he's officiall been sworn in. So to take advantage of the new relief program make sure you register below using quick guide to Skyline Nexus Pro.
